Panic attacks were common. Employees joked about suicide, had sex in the stairwells, and smoked weed on break.

A disturbing investigative report by the Verge last week revealed that some of Facebook’s contract moderators—who are tasked with keeping content like beheadings, bestiality, and racism out of your news feed—have turned to extreme coping mechanisms to get through the workday. Some contractors have been profoundly impacted by the content they’re exposed to, which may have implications for the rest of us who have grown accustomed to scrolling past sketchy links in our news feeds.

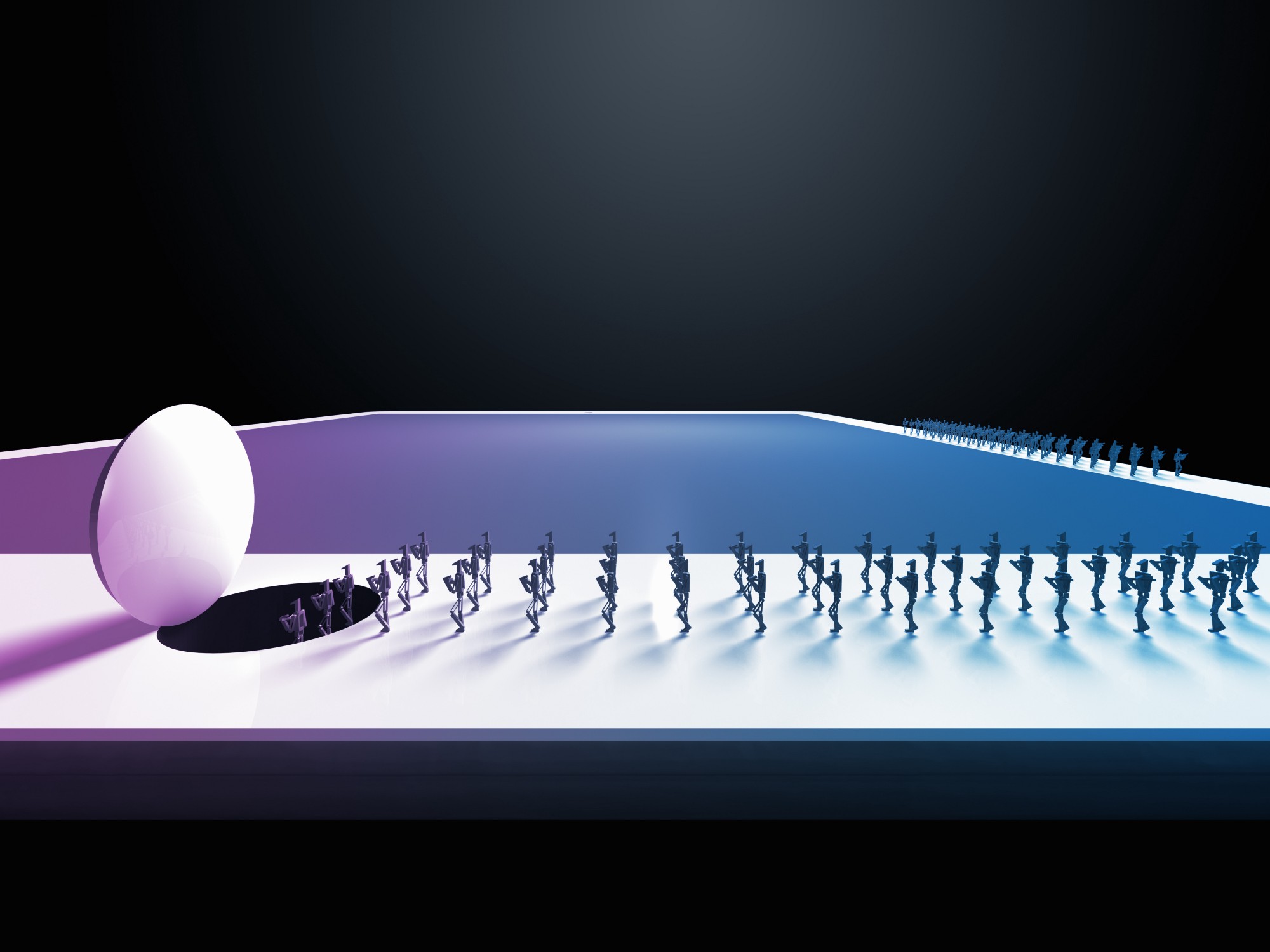

For some workers, the job became a gateway to embracing conspiracy theories themselves. Contractors told the Verge’s Casey Newton that some of their peers would “embrace fringe views” after days spent combing through conspiracy videos and memes.

“One auditor walks the floor promoting the idea that the Earth is flat,” Newton wrote.

Because most misinformation isn’t banned by the platform’s rules, Facebook generally won’t remove it outright, arguing instead that it should be countered with factual reports. Such efforts have not been entirely successful: A recent article in the Guardian found that “search results for groups and pages with information about vaccines were dominated by anti-vaccination propaganda” on the social network.